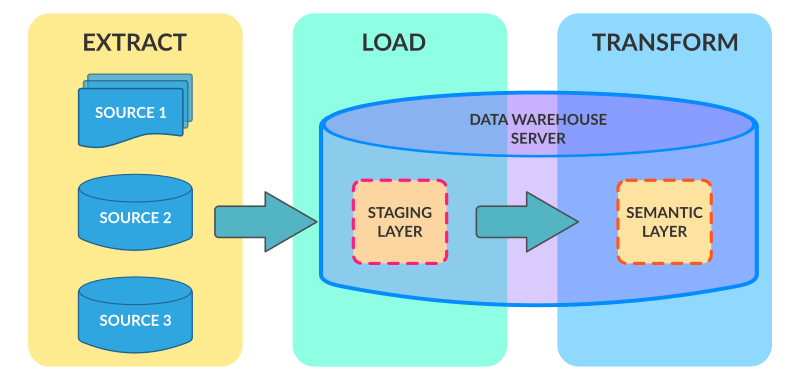

Extract, Transform, and Load (ETL) and Extract, Load, and Transform (ELT) are processes used in data integration and data warehousing to move data from various sources into a target destination, such as a data warehouse or a data lake. They differ in whether the data is transformed before or after it is loaded.

This guide takes you through sample recipes, with explanations, that represent practical use cases of ETL/ELT.

# Bulk vs Batch

Bulk and batch actions and triggers are available throughout Workato. Bulk processing lets you process large amounts of data in a single job, which makes it especially suited to ETL/ELT. Batch processing is restricted by batch sizes and memory constraints, and is generally less suitable in the context of ETL/ELT.

# Extract, Transform, and Load (ETL)

ETL begins with the extraction phase, where data is sourced from multiple heterogeneous sources, including databases, files, APIs, and web services. This raw data is then subjected to a transformation phase (cleansing, filtering, etc.) and finally loaded into a target system, typically a data warehouse.

# Sample ETL Recipe

A copy of the sample recipe can be found here.

The sample ETL recipe shows how you can extract data from an on-prem data source (SQL Server), merge it with a product catalog stored in Workato FileStorage, and load the transformed output into a data warehouse (BigQuery). Additionally, Workato's file streaming capabilities allow you to transfer data without having to worry about time or memory constraints. This recipe serves as a general guide when building ETL recipes, and performs basic transformations on data extracted from an on-prem data source before loading it into a data warehouse. This recipe does not require any additional steps behind the scenes.

The last step of the recipe is the final loading of the transformed output into a data warehouse. It uses Load Data into BigQuery to load the transformed data.

# Extract, Load, and Transform (ELT)

Similar to ETL, ELT starts with the extraction phase, where data is extracted from various sources. ELT then focuses on loading the extracted data into a target system such as a data lake or distributed storage. Once the data is loaded, transformations occur within the target system.

# Sample ELT Recipe

A copy of the sample recipe can be found here.

The sample ELT recipe shows how you can extract data from a cloud data source (Salesforce) into a data warehouse (Snowflake). This recipe serves as a guide when building ELT recipes, and performs the basic functionality of extracting bulk data from cloud data sources and loading it into a database. You should have set up an external stage on Snowflake with the necessary permissions. More information can be found here.

ELT consists of three primary stages: Extract, Load, and Transform.

Extract: During data extraction, data is copied or exported from source locations to a staging area. The data set can consist of many data types and come from virtually any structured or unstructured source. That said, ELT is more typically used with unstructured data.

Load: In this step, the extracted data is moved from the staging area into a data storage area, such as a data warehouse or data lake. For most organizations, the data loading process is automated, well-defined, continuous and batch-driven. Typically, ELT takes place during business hours, when traffic on the source systems and the data warehouse is at its peak and consumers are waiting to use the data for analysis or otherwise.

Transform: In this stage, a schema-on-write approach is employed, which applies the schema for the data using SQL, or transforms the data, prior to analysis. Transformations can include filtering, cleansing, de-duplicating, validating and authenticating the data, as well as performing calculations, translations, data analysis or summaries based on the raw data.
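The ordering difference between ETL and ELT can be sketched in a few lines of Python. This is an illustrative sketch only, not a Workato recipe or API. It assumes an in-memory SQLite database as a stand-in for the data warehouse, and the table names (`etl_orders`, `raw_orders`, `elt_orders`), the sample rows, and the helper functions are all hypothetical. The ETL path cleans the rows in application code before loading them, while the ELT path loads the raw rows first and then transforms them inside the target using SQL.

```python
import sqlite3

# Hypothetical raw rows from a source system (id, product, amount).
RAW_ROWS = [
    (1, "widget", 10.0),
    (1, "widget", 10.0),   # exact duplicate
    (2, "gadget", -5.0),   # invalid: negative amount
    (3, "gizmo", 7.5),
]


def clean(rows):
    """Transform in application code: de-duplicate and drop negative amounts."""
    seen = set()
    cleaned = []
    for row in rows:
        if row in seen or row[2] < 0:
            continue
        seen.add(row)
        cleaned.append(row)
    return cleaned


def run_etl(conn, rows):
    """ETL: transform first, then load only the cleaned rows into the target."""
    conn.execute("CREATE TABLE etl_orders (id INTEGER, product TEXT, amount REAL)")
    conn.executemany("INSERT INTO etl_orders VALUES (?, ?, ?)", clean(rows))
    return conn.execute(
        "SELECT id, product, amount FROM etl_orders ORDER BY id"
    ).fetchall()


def run_elt(conn, rows):
    """ELT: load the raw rows as-is, then transform inside the target with SQL."""
    conn.execute("CREATE TABLE raw_orders (id INTEGER, product TEXT, amount REAL)")
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", rows)
    conn.execute(
        "CREATE TABLE elt_orders AS "
        "SELECT DISTINCT id, product, amount FROM raw_orders WHERE amount >= 0"
    )
    return conn.execute(
        "SELECT id, product, amount FROM elt_orders ORDER BY id"
    ).fetchall()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    print(run_etl(conn, RAW_ROWS))
    print(run_elt(conn, RAW_ROWS))
    conn.close()
```

Both functions end up with the same cleaned rows. The difference is where the cleaning runs, and that the ELT path keeps the untouched raw rows in the target (`raw_orders`) so they can be transformed again later with different SQL.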
